Generations of scientists have compared the universe to a giant, complex game, raising questions about who is doing the playing and what it would mean to win.

If the universe is a game, then who is playing it?

The following is an extract from our Lost in Space-Time newsletter. Each month, we hand over the keyboard to a physicist or mathematician to tell you about fascinating ideas from their corner of the universe.

Is the universe a game? The famed physicist Richard Feynman certainly thought so: “The world is something like a great chess game being played by the gods, and we are observers of the game.” As we watch, our job as scientists is to try to work out the rules of the game.

The 17th-century mathematician Gottfried Wilhelm Leibniz also viewed the universe as a game, and he even funded the founding of an academy in Berlin dedicated to the study of games: “I strongly approve of the study of games of reason, not for their own sake but because they help us to perfect the art of thinking.”

Our species loves playing games, not just as children but well into adulthood. Games are thought to have been an important part of our evolutionary development, so much so that the cultural theorist Johan Huizinga proposed we should be called Homo ludens, the playing species, rather than Homo sapiens. Some have suggested that once we realised the universe is governed by rules, we began developing games as a way to experiment with the consequences of those rules.

Take, for example, one of the first board games we created. The Royal Game of Ur dates back to around 2500 BC and was found in the Sumerian city of Ur, part of Mesopotamia. Tetrahedral dice are used to race five pieces belonging to each player along a shared sequence of 12 squares. One interpretation of the game is that the 12 squares represent the 12 constellations of the zodiac, which form a fixed backdrop to the night sky, and the five pieces correspond to the five visible planets that the ancients observed moving across that sky.

But can the universe itself be considered a game? Defining what actually constitutes a game has been a subject of heated debate. The logician Ludwig Wittgenstein believed that words cannot be pinned down by a dictionary definition and gain their meaning only through the way they are used, in a process he called the “language game”. One example of a word that he believed gets its meaning through use rather than definition is “game” itself. Every time you try to define the word “game”, you end up including some things that are not games and excluding others you meant to include.

Other philosophers have been less defeatist and have tried to identify the qualities that define a game. Everyone, Wittgenstein included, agrees that one aspect common to all games is that they are defined by rules. These rules control what you can and cannot do in the game. That is why the idea of the universe as a game took hold as soon as we understood that the universe is governed by rules that determine how it evolves.

In his book Man, Play and Games, the theorist Roger Caillois proposed five other key traits that define a game: uncertainty, unproductiveness, separateness, imagination and freedom. So how does the universe match up to these other characteristics?

The role of uncertainty is interesting. We enter a game because either side has a chance of winning. If we knew in advance how the game would end, it would lose all its power. That is why keeping the uncertainty alive for as long as possible is a key component of game design.

The polymath Pierre-Simon Laplace famously declared that Isaac Newton's identification of the laws of motion had removed all uncertainty from the game of the universe: “We may regard the present state of the universe as the effect of its past and the cause of its future. An intellect which at a certain moment would know all forces that set nature in motion, and all positions of all items of which nature is composed, if this intellect were also vast enough to submit these data to analysis, it would embrace in a single formula the movements of the greatest bodies of the universe and those of the tiniest atom; for such an intellect nothing would be uncertain and the future just like the past would be present before its eyes.”

Solved games suffer the same fate. Connect 4 is a solved game in the sense that we now know an algorithm that always guarantees the first player a win. With perfect play, there is no uncertainty. This is why games of pure strategy sometimes suffer: if one player is much better than their opponent, there is little uncertainty about the outcome. Donald Trump versus Garry Kasparov at chess would not be an interesting game.
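To make the idea of a solved game concrete, here is a minimal sketch in Python. It does not solve Connect 4, which needs a far larger search; instead it assumes a much smaller, hypothetical counting game in which players alternately remove 1, 2 or 3 counters from a pile and whoever takes the last counter wins. The names `MAX_TAKE`, `player_to_move_wins` and `best_move` are illustrative choices, not taken from the article.

```python
from functools import lru_cache

MAX_TAKE = 3  # assumed rule: a player removes 1, 2 or 3 counters per turn


@lru_cache(maxsize=None)
def player_to_move_wins(counters: int) -> bool:
    """Return True if the player about to move can force a win.

    Whoever takes the last counter wins, so facing an empty pile
    means the previous player has already won.
    """
    if counters == 0:
        return False
    # A position is winning if some legal move leaves the opponent losing.
    return any(
        not player_to_move_wins(counters - take)
        for take in range(1, min(MAX_TAKE, counters) + 1)
    )


def best_move(counters: int):
    """Return a winning number of counters to take, or None if none exists."""
    for take in range(1, min(MAX_TAKE, counters) + 1):
        if not player_to_move_wins(counters - take):
            return take
    return None


if __name__ == "__main__":
    for pile in range(1, 13):
        print(pile, player_to_move_wins(pile), best_move(pile))
```

Once every position has been labelled as won or lost in this way, the outcome under perfect play is known before the first move, which is exactly the loss of uncertainty described above.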

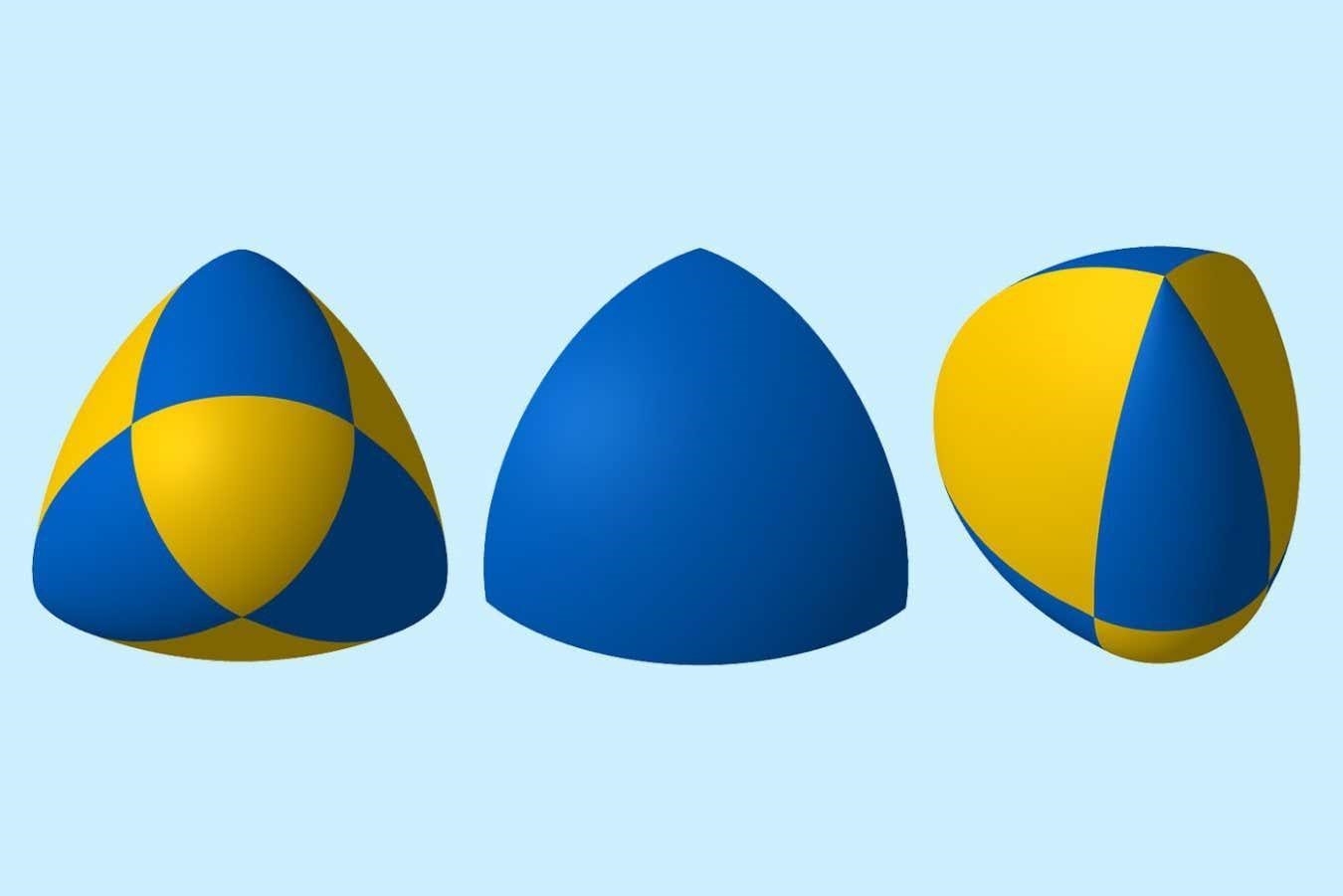

However, the discoveries of the 20th century reintroduced uncertainty into the rules of the universe. Quantum physics asserts that the outcome of an experiment is not predetermined by its current state. The pieces in the game might head in several different directions, depending on the collapse of the wave function. Contrary to what Albert Einstein believed, it seems that God does play dice.

Even if the game were deterministic, the mathematics of chaos theory implies that players and observers could never know the current state of the game in complete detail, and that small differences in the current state can lead to very different outcomes.
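A small numerical sketch can illustrate this sensitivity. It uses the logistic map, a standard textbook example of chaos that the article itself does not mention, with the parameter `r = 4.0` and the starting values chosen purely for illustration. Two runs begin one ten-billionth apart and follow exactly the same deterministic rule.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate the logistic map x -> r * x * (1 - x), starting from x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def gaps(x0, delta, steps):
    """Absolute difference, step by step, between two nearby starting points."""
    a = logistic_trajectory(x0, steps)
    b = logistic_trajectory(x0 + delta, steps)
    return [abs(p - q) for p, q in zip(a, b)]


if __name__ == "__main__":
    # Print the gap every ten steps to watch it grow.
    for step, gap in enumerate(gaps(0.2, 1e-10, 60)):
        if step % 10 == 0:
            print(f"step {step:2d}: gap = {gap:.3e}")
```

The rule contains no randomness, yet the two runs soon bear no resemblance to each other, so an observer who cannot measure the starting state exactly cannot predict the outcome.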

The requirement that a game be unproductive is an interesting one. If we play a game for money or to teach us something, Caillois believed the game has become work: a game is “an occasion of pure waste: waste of time, energy, ingenuity, skill”. Sadly, unless you believe in some higher power, all the evidence points to the universe having no ultimate purpose. The universe is not there for a reason. It just is.

The other three qualities that Caillois identifies perhaps apply less to the universe itself, but they describe a game as something distinct from the universe, even though it runs in parallel with it. A game is separate: it operates outside normal time and space. A game has its own demarcated space in which it is played, within a set time limit. It has its own beginning and its own end. A game is a time out from our universe. It is an escape to a parallel universe.

The fact that a game should have an end is also interesting. There is the concept of the infinite game, introduced by the philosopher James P. Carse in his book Finite and Infinite Games. You do not aim to win an infinite game. Winning terminates the game and therefore makes it finite. Instead, the job of a player of an infinite game is to keep the game going, to make sure it never ends. Carse concludes his book with the rather cryptic statement, “There is but one infinite game.” One senses that he is referring to the fact that we are all players in the infinite game going on around us, the infinite game that is the universe. Current physics, however, does posit a final move: the heat death of the universe means this universe may have an end that we can do nothing to avoid.

Caillois's quality of imagination refers to the idea that games are make-believe. A game consists of creating a second reality that runs in parallel with real life. It is a fictional universe that the players voluntarily conjure up, independent of the stern reality of the physical universe we are part of.

Finally, Caillois believes that a game demands freedom. Anyone who is forced to play a game is working rather than playing. A game is therefore connected with another important aspect of human consciousness: our free will.

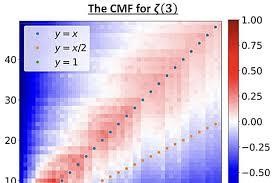

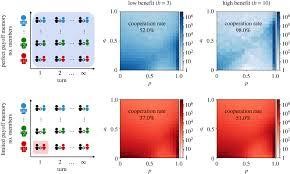

This raises a question: if the universe is a game, who is playing it, and what would it mean to win? Are we merely pawns in this game rather than players? Some have speculated that our universe is really a huge simulation. Someone programmed the rules, fed in some starting data and set the simulation running. This is why John Conway's Game of Life feels closest to the sort of game the universe might be. In Conway's game, pixels on an infinite grid are born, live and die according to their environment and the rules of the game. Conway's achievement was to create a set of rules that gave rise to such interesting complexity.
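The article does not spell out Conway's rules, so the sketch below assumes the standard ones: a live cell with two or three live neighbours survives, a dead cell with exactly three live neighbours is born, and every other cell is dead in the next generation. Storing only the coordinates of live cells lets the grid stay unbounded. The function names `step` and `run` are illustrative.

```python
from collections import Counter


def step(live):
    """Advance one generation; `live` is a set of (x, y) live cells."""
    # Count how many live neighbours each nearby cell has.
    neighbour_counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # Birth on exactly three neighbours, survival on two or three.
    return {
        cell
        for cell, n in neighbour_counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


def run(live, generations):
    """Apply `step` repeatedly and return the final set of live cells."""
    for _ in range(generations):
        live = step(live)
    return live


if __name__ == "__main__":
    # The well-known "glider" pattern, which travels diagonally across the grid.
    glider = {(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)}
    print(sorted(run(glider, 4)))
```

Nothing in these few lines mentions movement, yet the glider travels across the grid: a small instance of simple rules producing behaviour that nobody wrote into them explicitly.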

If the universe is a game, it seems we are very lucky to find ourselves part of one that has the perfect balance of simplicity and complexity, chance and strategy, drama and jeopardy to make it interesting. Even as we discover the rules of the game, it promises to be a fascinating match right up to the moment it reaches its end.

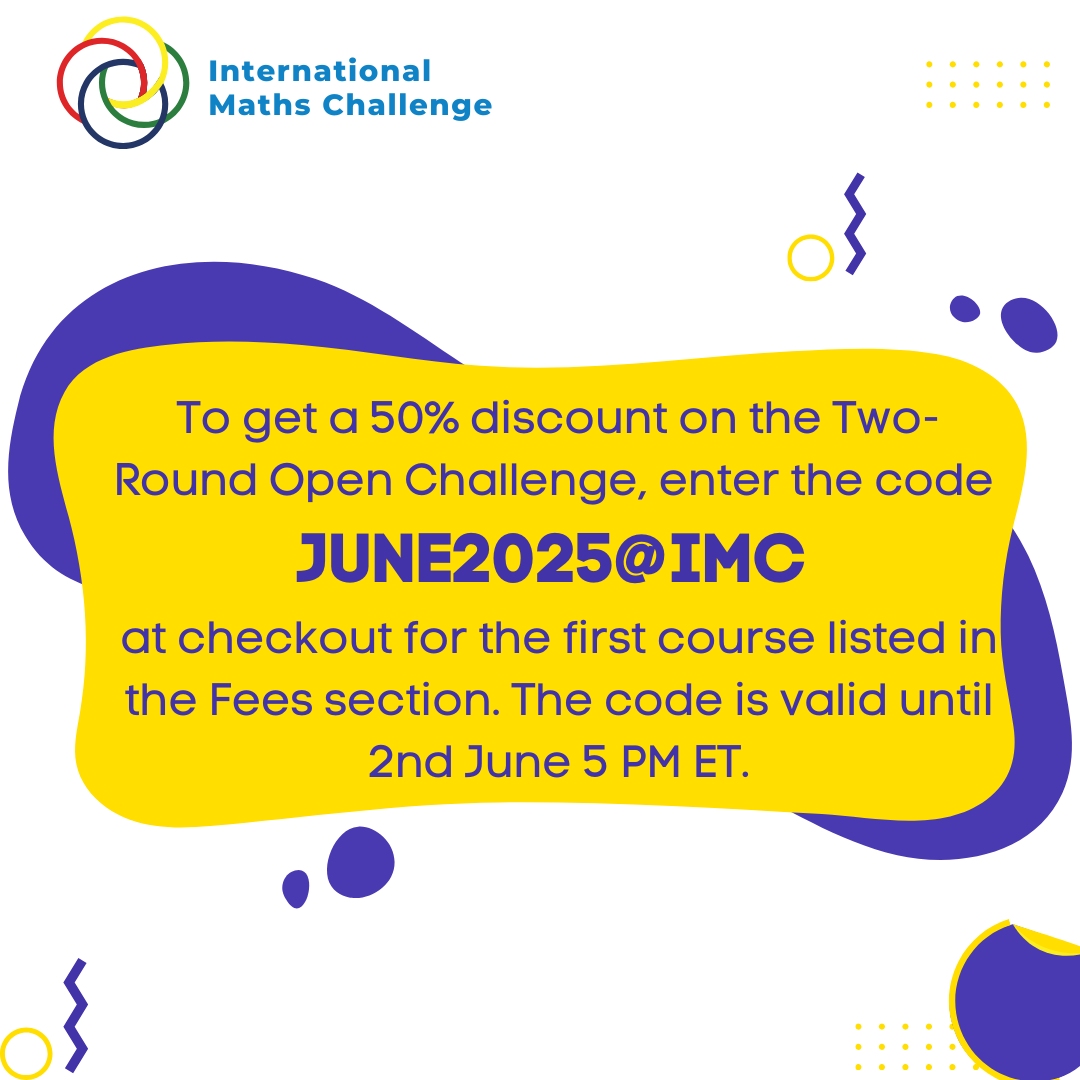


*Credit for the article goes to Marcus du Sautoy*