Until recently, working out how three objects can stably orbit each other was nearly impossible, but now mathematicians have found a record number of solutions.

The motion of three objects is more complex than you might think

The question of how three objects can form a stable orbit around each other has troubled mathematicians for more than 300 years, but now researchers have found a record 12,000 orbital arrangements permitted by Isaac Newton’s laws of motion.

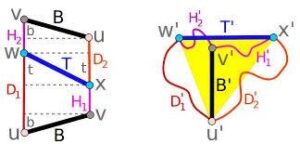

While mathematically describing the movement of two orbiting bodies and how each one’s gravity affects the other is relatively simple, the problem becomes vastly more complex once a third object is added. In 2017, researchers found 1223 new solutions to the three-body problem, doubling the number of possibilities then known. Now, Ivan Hristov at Sofia University in Bulgaria and his colleagues have unearthed more than 12,000 further orbits that work.

The team used a supercomputer to run an optimised version of the algorithm used in the 2017 work, discovering 12,392 new solutions. Hristov says that if he repeated the search with even more powerful hardware, he could find “five times more”.

All the solutions found by the researchers start with all three bodies being stationary, before entering freefall as they are pulled towards each other by gravity. Their momentum then carries them past each other before they slow down, stop and are attracted together once more. The team found that, assuming there is no friction, this pattern would repeat infinitely.
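The free-fall start described above can be explored numerically. What follows is a minimal sketch, not the researchers' optimised algorithm. It integrates Newton's equations for three equal point masses released from rest, using a velocity Verlet step. The units (G = 1), the masses and the starting positions are hypothetical choices for illustration. They are not one of the published periodic orbits. The sketch only shows the bodies starting stationary and being pulled towards each other. Finding starting positions that make the motion repeat takes the kind of large search the team ran on a supercomputer.

```python
import math

G = 1.0  # assumption: units chosen so the gravitational constant equals 1


def accelerations(pos, masses):
    """Newtonian gravitational acceleration on each body due to the others."""
    n = len(pos)
    acc = [[0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            dx = pos[j][0] - pos[i][0]
            dy = pos[j][1] - pos[i][1]
            r3 = (dx * dx + dy * dy) ** 1.5
            # Equal and opposite pulls on the pair (Newton's third law)
            acc[i][0] += G * masses[j] * dx / r3
            acc[i][1] += G * masses[j] * dy / r3
            acc[j][0] -= G * masses[i] * dx / r3
            acc[j][1] -= G * masses[i] * dy / r3
    return acc


def energy(pos, vel, masses):
    """Total energy: kinetic plus pairwise gravitational potential."""
    kinetic = sum(0.5 * m * (v[0] ** 2 + v[1] ** 2) for m, v in zip(masses, vel))
    potential = 0.0
    for i in range(len(pos)):
        for j in range(i + 1, len(pos)):
            potential -= G * masses[i] * masses[j] / math.dist(pos[i], pos[j])
    return kinetic + potential


def simulate(start, masses, dt, steps):
    """Velocity Verlet integration; every body starts stationary (free fall)."""
    pos = [list(p) for p in start]
    vel = [[0.0, 0.0] for _ in pos]
    acc = accelerations(pos, masses)
    for _ in range(steps):
        for k in range(len(pos)):
            # Half-step velocity update, then full-step position update
            vel[k][0] += 0.5 * dt * acc[k][0]
            vel[k][1] += 0.5 * dt * acc[k][1]
            pos[k][0] += dt * vel[k][0]
            pos[k][1] += dt * vel[k][1]
        acc = accelerations(pos, masses)
        for k in range(len(pos)):
            # Second half-step velocity update with the new accelerations
            vel[k][0] += 0.5 * dt * acc[k][0]
            vel[k][1] += 0.5 * dt * acc[k][1]
    return pos, vel


# Hypothetical example: three equal masses released from rest
masses = [1.0, 1.0, 1.0]
start = [(-1.0, 0.0), (1.0, 0.0), (0.2, 0.8)]
pos, vel = simulate(start, masses, dt=1e-4, steps=2000)
print(pos, vel)
```

Velocity Verlet is used here because it keeps the total energy close to its starting value over long runs. Close encounters between bodies would still call for a much smaller step or an adaptive, high-precision integrator.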

Solutions to the three-body problem are of interest to astronomers, as they can describe how any three celestial objects – be they stars, planets or moons – can maintain a stable orbit. But it remains to be seen how stable the new solutions are when the tiny influences of additional, distant bodies and other real-world noise are taken into account.

“Their physical and astronomical relevance will be better known after the study of stability – it’s very important,” says Hristov. “But, nevertheless – stable or unstable – they are of great theoretical interest. They have a very beautiful spatial and temporal structure.”

Juhan Frank at Louisiana State University says that finding so many solutions in a precise set of conditions will be of interest to mathematicians, but of limited application in the real world.

“Most, if not all, require such precise initial conditions that they are probably never realised in nature,” says Frank. “After a complex and yet predictable orbital interaction, such three-body systems tend to break into a binary and an escaping third body, usually the least massive of the three.”


*Credit for article given to Matthew Sparkes*