Credit: Peter Dazeley Getty Images

Not getting a flu shot could endanger more than just one’s own health, herd immunity calculations show

As the annual flu season approaches, medical professionals are again encouraging people to get flu shots. Perhaps you are among those who rationalize skipping the shot on the grounds that “I never get the flu” or “if I get sick, I get sick” or “I’m healthy, so I’ll get over it.” What you might not realize is that these vaccination campaigns for flu and other diseases are about much more than your health. They’re about achieving a collective resistance to disease that goes beyond individual well-being—and that is governed by mathematical principles unforgiving of unwise individual choices.

When talking about vaccination and disease control, health authorities often invoke “herd immunity.” This term refers to the level of immunity in a population that’s needed to prevent an outbreak from happening. Low levels of herd immunity are often associated with epidemics, such as the measles outbreak in 2014-2015 that was traced to exposures at Disneyland in California. A study investigating cases from that outbreak demonstrated that measles vaccination rates in the exposed population may have been as low as 50 percent. This number was far below the threshold needed for herd immunity to measles, and it put the population at risk of disease.

The necessary level of immunity in the population isn’t the same for every disease. For measles, a very high level of immunity needs to be maintained to prevent its transmission because the measles virus is possibly the most contagious known organism. If people infected with measles enter a population with no existing immunity to it, they will on average each infect 12 to 18 others. Each of those infections will in turn cause 12 to 18 more, and so on until the number of individuals who are susceptible to the virus but haven’t caught it yet is down to almost zero. The number of people infected by each contagious individual is known as the “basic reproduction number” of a particular microbe (abbreviated R0), and it varies widely among germs. The calculated R0 of the West African Ebola outbreak was found to be around 2 in a 2014 publication, similar to the R0 computed for the 1918 influenza pandemic based on historical data.
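
To get a feel for how quickly those numbers compound, here is a minimal Python sketch. It assumes a single starting case and a population where everyone is still susceptible, which is only realistic in the first few rounds of an outbreak. The function name `cases_by_generation` is made up for this illustration.

```python
def cases_by_generation(r0: float, generations: int) -> list[float]:
    """Expected new cases in each round of transmission, from one index case.

    Assumption: every contact is still susceptible, so each case
    infects r0 others. This only holds early in an outbreak.
    """
    return [r0 ** g for g in range(generations + 1)]


# Compare an R0 of about 2 (Ebola, 1918 flu) with the measles range of 12 to 18.
for r0 in (2, 12, 18):
    print(f"R0 = {r0}: {cases_by_generation(r0, 3)}")
```

After just three rounds of transmission, an R0 of 2 yields 8 new cases in that round, while an R0 of 12 yields 1,728.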

If the Ebola virus’s R0 sounds surprisingly low to you, that’s probably because you have been misled by the often hysterical reporting about the disease. The reality is that the virus is highly infectious only in the late stages of the disease, when people are extremely ill with it. The ones most likely to be infected by an Ebola patient are caregivers, doctors, nurses and burial workers—because they are the ones most likely to be present when the patients are “hottest” and most likely to transmit the disease. The scenario of an infectious Ebola patient boarding an aircraft and passing on the disease to other passengers is extremely unlikely because an infectious patient would be too sick to fly. In fact, we know of cases of travelers who were incubating Ebola virus while flying, and they produced no secondary cases during those flights.

Note that the R0 isn’t related to how severe an infection is, but to how efficiently it spreads. Ebola killed about 40 percent of those infected in West Africa, while the 1918 influenza pandemic had a case-fatality rate of about 2.5 percent, even though the two had similar R0 values. In contrast, polio and smallpox historically spread to about 5 to 7 people each, which puts them in the same range as modern-day HIV and pertussis (whooping cough).

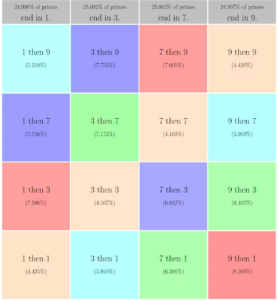

Determining the R0 of a particular microbe is a matter of more than academic interest. If you know how many secondary cases to expect from each infected person, you can figure out the level of herd immunity needed in the population to keep the microbe from spreading. This is calculated by taking the reciprocal of R0 and subtracting it from 1. For measles, with an R0 of 12 to 18, you need somewhere between about 92 percent (1 – 1/12) and 94 percent (1 – 1/18) of the population to have effective immunity to keep the virus from spreading. For flu, it’s much lower: only around 50 percent. And yet we rarely attain even that level of immunity with vaccination.
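
The arithmetic is simple enough to check for yourself. The short Python sketch below applies the 1 – 1/R0 rule to the figures quoted in this article. The function name is hypothetical, and the flu entry assumes an R0 of about 2, in line with the 1918 estimate mentioned earlier.

```python
def herd_immunity_threshold(r0: float) -> float:
    """Fraction of the population that must be immune: 1 - 1/R0.

    If R0 is 1 or less, each case fails to replace itself on average,
    so no population immunity is needed to stop sustained spread.
    """
    if r0 <= 1:
        return 0.0
    return 1.0 - 1.0 / r0


# R0 values taken from the article; the flu value is an assumed round figure.
examples = [("measles, low estimate", 12), ("measles, high estimate", 18), ("flu", 2)]
for name, r0 in examples:
    print(f"{name}: R0 = {r0}, threshold = {herd_immunity_threshold(r0):.1%}")
```

Note how slowly the threshold rises at the high end: going from an R0 of 12 to 18 adds only a few percentage points, but every one of those points is hard to win in practice.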

Once we understand the concept of R0, so much about patterns of infectious disease makes sense. It explains, for example, why there are childhood diseases—infections that people usually encounter when young, and against which they often acquire lifelong immunity after the infections resolve. These infections include measles, mumps, rubella and (prior to its eradication) smallpox—all of which periodically swept through urban populations in the centuries prior to vaccination, usually affecting children.

Do these viruses have some unusual affinity for children? Before vaccination, did they just go away after each outbreak and only return to cities at approximately five- to 10-year intervals? Not usually. After a large outbreak, viruses linger in the population, but the level of herd immunity is high because most susceptible individuals have been infected and (if they survived) developed immunity. Consequently, the viruses spread slowly: In practice, their R0 is just slightly above 1. This is known as the “effective reproduction number”—the rate at which the microbe is actually transmitted in a population that includes both susceptible and non-susceptible individuals (in other words, a population where some immunity already exists). Meanwhile, new susceptible children are born into the population. Within a few years, the population of young children who have never been exposed to the disease dilutes the herd immunity in the population to a level below what’s needed to keep outbreaks from occurring. The virus can then spread more rapidly, resulting in another epidemic.
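
This cycle can be sketched in a few lines of Python. The standard simplification is that the effective reproduction number equals R0 multiplied by the fraction of people who are still susceptible. The second function is a toy: it assumes immunity never wanes, that nobody is infected between outbreaks, and that a fixed share of the population (the hypothetical `yearly_dilution` parameter) is replaced each year by susceptible newborns.

```python
def effective_r(r0: float, immune_fraction: float) -> float:
    """Effective reproduction number: R0 times the susceptible fraction."""
    if not 0.0 <= immune_fraction <= 1.0:
        raise ValueError("immune_fraction must be between 0 and 1")
    return r0 * (1.0 - immune_fraction)


def years_until_outbreak_possible(
    r0: float, immune_fraction: float, yearly_dilution: float
) -> int:
    """Years until births push the effective reproduction number above 1.

    Toy assumption: each year a fraction `yearly_dilution` of the
    population is replaced by newborns with no immunity.
    """
    if r0 <= 1:
        raise ValueError("with R0 <= 1 the infection cannot spread at all")
    if not 0.0 < yearly_dilution <= 1.0:
        raise ValueError("yearly_dilution must be in (0, 1]")
    years = 0
    while effective_r(r0, immune_fraction) <= 1.0:
        immune_fraction *= 1.0 - yearly_dilution
        years += 1
    return years


# Illustrative numbers only: R0 of 15, 97 percent immune after an outbreak,
# and 2 percent of the population replaced by newborns each year.
print(effective_r(15, 0.97))
print(years_until_outbreak_possible(15, 0.97, 0.02))
```

The exact number of years depends entirely on the made-up inputs; the point is only that a steady trickle of births is enough to reopen the door.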

An understanding of the basic reproduction number also explains why diseases spread so rapidly in new populations: Because those hosts lack any immunity to the infection, the microbe can achieve its maximum R0. This is why diseases from invading Europeans spread so rapidly and widely among indigenous populations in the Americas and Hawaii during their first encounters. Having never been exposed to these microbes before, the non-European populations had no immunity to slow their spread.

If we further understand what constellation of factors contributes to an infection’s R0, we can begin to develop interventions to interrupt the transmission. One aspect of the R0 is the average number and frequency of contacts that an infected individual has with others susceptible to the infection. Outbreaks happen more frequently in large urban areas because individuals living in crowded cities have more opportunities to spread the infection: They are simply in contact with more people and have a higher likelihood of encountering someone who lacks immunity. To break this chain of transmission during an epidemic, health authorities can use interventions such as isolation (keeping infected individuals away from others) or even quarantine (keeping individuals who have been exposed to infectious individuals—but are not yet sick themselves—away from others).

Other factors that can affect the R0 involve both the host and the microbe. When an infected person has contact with someone who is susceptible, what is the likelihood that the microbe will be transmitted? Frequently, hosts can reduce the probability of transmission through their behaviors: by covering coughs or sneezes for diseases transmitted through the air, by washing their contaminated hands frequently, and by using condoms to contain the spread of sexually transmitted diseases.

These behavioral changes are important, but we know they’re far from perfect and not particularly efficient in the overall scheme of things. Take hand-washing, for example. We’ve known of its importance in preventing the spread of disease for 150 years. Yet studies have shown that hand-washing compliance even by health care professionals is astoundingly low: fewer than half of doctors and nurses wash their hands when they’re supposed to while caring for patients. It’s exceedingly difficult to get people to change their behavior, which is why public health campaigns built around convincing people to behave differently can sometimes be less effective than vaccination campaigns.

How long a person can actively spread the infection is another factor in the R0. Most infections can be transmitted for only a few days or weeks. Adults with influenza can spread the virus for about a week, for example. Some microbes can linger in the body and be transmitted for months or years. HIV is most infectious in the early stages when concentrations of the virus in the blood are very high, but even after those levels subside, the virus can be transmitted to new partners for many years. Interventions such as drug treatments can decrease the transmissibility of some of these organisms.
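
The three ingredients discussed so far (how many contacts, how likely each contact is to transmit, and how long a person stays infectious) are often combined in a simple textbook approximation: multiply them together. The Python sketch below does exactly that. Every number in it is invented for illustration and does not describe any real disease.

```python
def basic_reproduction_number(
    contacts_per_day: float,
    transmission_probability: float,
    infectious_days: float,
) -> float:
    """Textbook approximation: R0 = contacts x probability x duration."""
    return contacts_per_day * transmission_probability * infectious_days


# Hypothetical baseline: 10 contacts a day, 3 percent chance of
# transmission per contact, infectious for 7 days.
baseline = basic_reproduction_number(10, 0.03, 7)

# Isolation or quarantine cuts the number of contacts.
fewer_contacts = basic_reproduction_number(4, 0.03, 7)

# Covering coughs or washing hands lowers the chance per contact.
safer_contacts = basic_reproduction_number(10, 0.01, 7)

# Treatment may shorten the infectious period.
shorter_illness = basic_reproduction_number(10, 0.03, 3)

for label, value in [
    ("baseline", baseline),
    ("fewer contacts", fewer_contacts),
    ("safer contacts", safer_contacts),
    ("shorter infectious period", shorter_illness),
]:
    print(f"{label}: {value:.2f}")
```

Because the factors multiply, shrinking any one of them far enough can pull the product below 1, which is why such different interventions can all work.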

The microbes’ properties are also important. While hosts can purposely protect themselves, microbes don’t choose their traits. But over time, evolution can shape them in a manner that increases their chances of transmission, such as by enabling measles to linger longer in the air and allowing smallpox to survive longer in the environment.

By bringing together all these variables (size and dynamics of the host population, levels of immunity in the population, presence of interventions, microbial properties, and more), we can map and predict the spread of infections in a population using mathematical models. Sometimes these models can overestimate the spread of infection, as was the case with the models for the Ebola outbreak in 2014. One model predicted up to 1.4 million cases of Ebola by January 2015; in reality, the outbreak ended in 2016 with only 28,616 cases. On the other hand, models used to predict the transmission of cholera during an outbreak in Yemen have been more accurate.
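
To show what such a model looks like in its simplest form, here is a bare-bones version of the classic SIR (susceptible, infected, recovered) model in Python, stepped forward one day at a time. It is a sketch, not the model used in any of the Ebola or cholera forecasts mentioned here. It assumes a closed, evenly mixing population with no births, deaths or interventions, and all parameter values are illustrative.

```python
def simulate_sir(
    population: int,
    initial_infected: int,
    r0: float,
    infectious_days: float,
    days: int,
    initial_immune_fraction: float = 0.0,
) -> float:
    """Return the total number of people ever infected after `days` days.

    Simplifying assumptions: everyone mixes evenly, recovery gives
    lasting immunity, and nothing changes during the outbreak.
    """
    gamma = 1.0 / infectious_days  # daily recovery rate
    beta = r0 * gamma              # daily transmission rate implied by R0

    immune = initial_immune_fraction * population
    susceptible = population - initial_infected - immune
    infected = float(initial_infected)
    total_infected = infected

    for _ in range(days):
        # New infections scale with contact between infected and susceptible people.
        new_infections = min(susceptible, beta * susceptible * infected / population)
        new_recoveries = gamma * infected
        susceptible -= new_infections
        infected += new_infections - new_recoveries
        total_infected += new_infections

    return total_infected


# Same microbe (R0 of 2), two populations: one with no immunity,
# one where 60 percent are already immune (above the 50 percent threshold).
no_immunity = simulate_sir(100_000, 10, r0=2, infectious_days=7, days=365)
high_immunity = simulate_sir(
    100_000, 10, r0=2, infectious_days=7, days=365, initial_immune_fraction=0.6
)
print(f"no prior immunity: about {no_immunity:,.0f} infected")
print(f"60 percent immune: about {high_immunity:,.0f} infected")
```

In the first run the infection sweeps through most of the population; in the second it fizzles after a handful of cases. Real forecasting models add many more details, which is also where their uncertainty comes from.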

The difference between the two? By the time the Ebola model was published, interventions to help control the outbreak were already under way. Campaigns had begun to raise awareness of how the virus was transmitted, and international aid had arrived, bringing in money, personnel and supplies to contain the epidemic. These interventions decreased the Ebola virus R0 primarily by isolating the infected and instituting safe burial practices, which reduced the number of susceptible contacts each case had. Shipments of gowns, gloves and soap that health care workers could use to protect themselves while treating patients reduced the chance that the virus would be transmitted. Eventually, those changes meant that the effective R0 fell below 1—and the epidemic ended. (Unfortunately, comparable levels of aid and interventions to stop cholera in Yemen have not been forthcoming.)

Catch-up vaccinations and the use of isolation and quarantine also likely helped to end the Disneyland measles epidemic, as well as a slightly earlier measles epidemic in Ohio. Knowing the factors that contribute to these outbreaks can aid us in stopping epidemics in their early stages. But to prevent them from happening in the first place, a population with a high level of immunity is, mathematically, our best bet for keeping disease at bay.

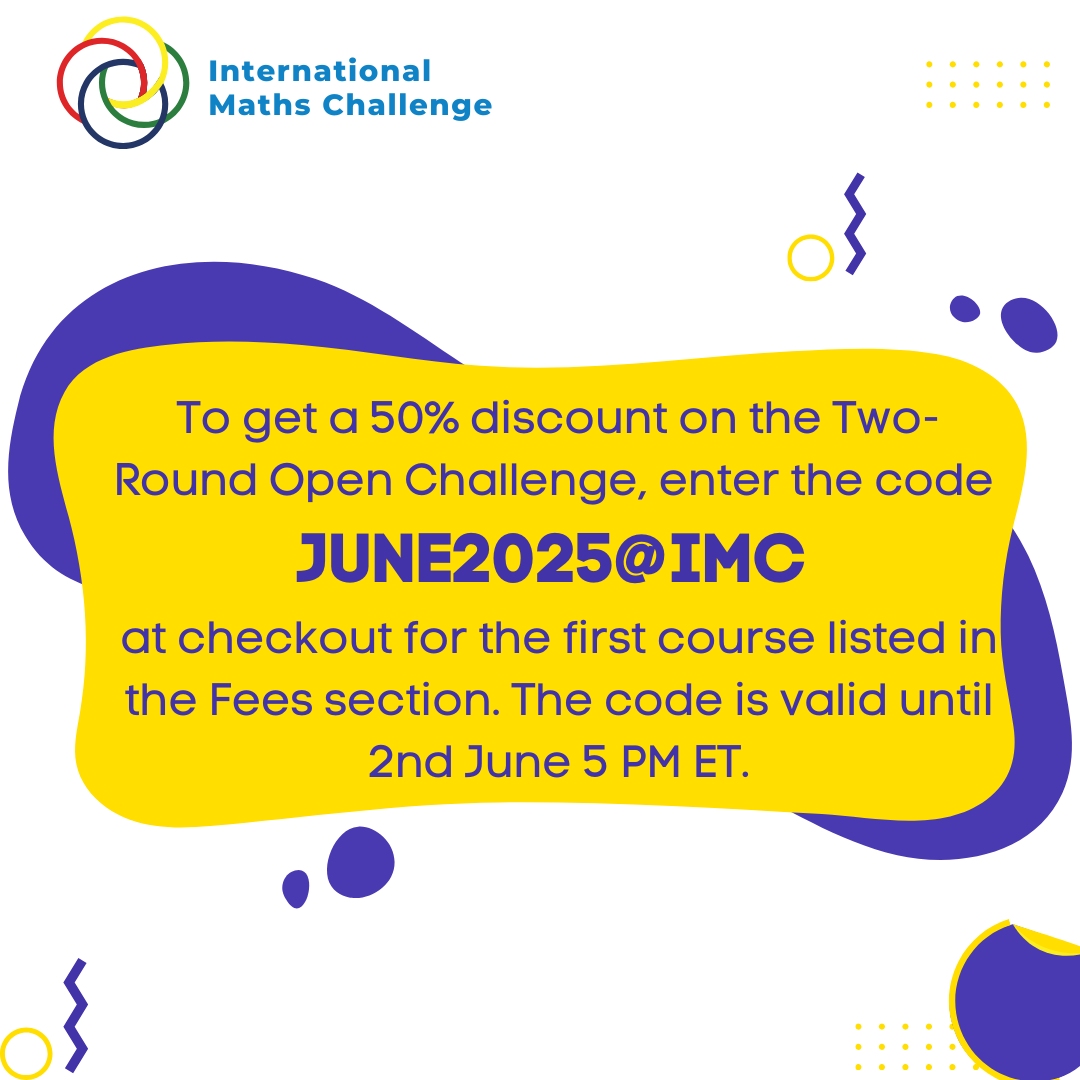


Credit of the article given to Tara C. Smith & Quanta Magazine