These are painful times for those hoping to see an international consensus and substantive action on global warming.

In the US, Republican presidential front-runner Mitt Romney said in June 2011: “The world is getting warmer” and “humans have contributed” but in October 2011 he backtracked to: “My view is that we don’t know what’s causing climate change on this planet.”

His Republican challenger Rick Santorum added: “We have learned to be sceptical of ‘scientific’ claims, particularly those at war with our common sense” and Rick Perry, who suspended his campaign to become the Republican presidential candidate last month, stated flatly: “It’s all one contrived phony mess that is falling apart under its own weight.”

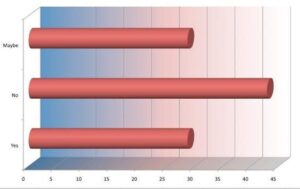

Meanwhile, the scientific consensus has moved in the opposite direction. In a study published in October 2011, 97% of climate scientists surveyed agreed global temperatures have risen over the past 100 years. Only 5% disagreed that human activity is a significant cause of global warming.

The study concluded: “We found disagreement over the future effects of climate change, but not over the existence of anthropogenic global warming.

“Indeed, it is possible that the growing public perception of scientific disagreement over the existence of anthropocentric warming, which was stimulated by press accounts of [the UK’s] ‘Climategate’ is actually a misperception of the normal range of disagreements that may persist within a broad scientific consensus.”

More progress has been made in Europe, where the EU has established targets to reduce emissions by 20% (from 1990 levels) by 2020. The UK, which has been beset by similar denial movements, was nonetheless able to establish, as a legally binding target, an 80% reduction by 2050 and is a world leader on abatement.

In Australia, any prospect for consensus was lost when Tony Abbott used opposition to the Labor government’s proposed carbon market to replace Malcolm Turnbull as leader of the Federal Opposition in late 2009.

It used to be possible to hear right-wing politicians in Australia or the US echo the Democratic congressman Henry Waxman, who said last year:

“If my doctor told me I had cancer, I wouldn’t scour the country to find someone to tell me that I don’t need to worry about it.”

But such rationality has largely left the debate in both the US and Oz. In Australia, a reformulated carbon tax policy was enacted in November only after a highly partisan debate.

In Canada, the debate is a tad more balanced. The centre-right Liberal government in British Columbia passed the first carbon tax in North America in 2008, but the governing Federal Conservative party now offers a reliable “anti-Kyoto” partnership with Washington.

Overviews of the evidence for global warming, together with responses to common questions, are available from various sources, including:

- Seven Answers to Climate Contrarian Nonsense, in Scientific American

- Climate change: A Guide for the Perplexed, in New Scientist

- Cooling the Warming Debate: Major New Analysis Confirms That Global Warming Is Real, in Science Daily

- Remind me again: how does climate change work? on The Conversation

It should be acknowledged in these analyses that all projections are based on mathematical models with a significant level of uncertainty regarding highly complex and only partially understood systems.

As 2011 Australian Nobel-Prize-winner Brian Schmidt explained while addressing a National Forum on Mathematical Education:

“Climate models have uncertainty and the earth has natural variation … which not only varies year to year, but correlates decade to decade and even century to century. It is really hard to design a figure that shows this in a fair way — our brain cannot deal with the correlations easily.

“But we do have mathematical ways of dealing with this problem. The Australian academy reports currently indicate that the models with the effects of CO₂ are with 90% statistical certainty better at explaining the data than those without.

“Most of us who work with uncertainty know that 90% statistical uncertainty cannot be easily shown within a figure — it is too hard to see …”

“ … Since predicting the exact effects of climate change is not yet possible, we have to live with uncertainty and take the consensus view that warming can cover a wide range of possibilities, and that the view might change as we learn more.”

But uncertainty is no excuse for inaction. The proposed counter-measures (e.g. infrastructure renewal and modernisation, large-scale solar and wind power, better soil remediation and water management, not to mention carbon taxation) are affordable and most can be justified on their own merits, while the worst-case scenario — do nothing while the oceans rise and the climate changes wildly — is unthinkable.

Some in the first world protest that any green energy efforts are dwarfed by expanding energy consumption in China and elsewhere. Sure, China’s future energy needs are prodigious, but China also now leads the world in green energy investment.

By blaming others and focusing the debate on the level of human responsibility for warming and on the accuracy of predictions, the deniers have managed to derail long-term action in favour of short-term economic policies.

Who in the scientific community is promoting the denial of global warming? As it turns out, the leading figures in this movement have ties to conservative research institutes funded mostly by large corporations, and have a history of opposing the scientific consensus on issues such as tobacco and acid rain.

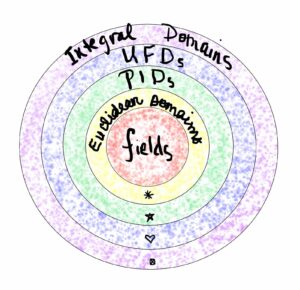

What’s more, those who lead the global warming denial movement – along with creationists, intelligent design writers and the “mathematicians” who flood our email inboxes with claims that pi is rational or other similar nonsense – are operating well outside the established boundaries of peer-reviewed science.

Austrian-born American physicist Fred Singer, arguably the leading figure of the denial movement, has only six peer-reviewed publications in the climate science field, and none since 1997.

After all, when issues such as these are “debated” in any setting other than a peer-reviewed journal or conference, one must ask: “If the author really has a solid argument, why isn’t he or she back in the office furiously writing up this material for submission to a leading journal, thereby assuring worldwide fame and glory, not to mention influence?”

In most cases, those who attempt to grab public attention through other means are themselves aware they are short-circuiting the normal process, and that they do not yet have the sort of solid data and airtight arguments that could withstand the withering scrutiny of scientific peer review.

When they press their views in public to a populace that does not understand how the scientific enterprise operates, they are being disingenuous.

With regard to claims that scientists are engaged in a “conspiracy” to hide the “truth” on an issue such as global warming or evolution, one should ask how a secret “conspiracy” could be maintained in a worldwide, multicultural community of hundreds of thousands of competitive researchers.

As Benjamin Franklin wrote in his Poor Richard’s Almanac: “Three can keep a secret, provided two of them are dead.” Or as one of your present authors quipped, tongue-in-cheek, in response to a state legislator who was skeptical of evolution: “You have no idea how humiliating this is to me — there is a secret conspiracy among leading scientists, but no-one deemed me important enough to be included!”

There’s another way to think about such claims: we have tens of thousands of senior scientists in their late fifties or early sixties who have seen their retirement savings decimated by the recent stock market plunge. These are scientists who now wonder if the day will ever come when they are financially well off enough to do their research without the constant stress and distraction of applying for grants (the majority of which are never funded).

All one of these scientists has to do to garner both worldwide fame and considerable fortune (through book contracts, the lecture circuit and TV deals) is to call a news conference and expose “the truth”. So why isn’t this happening?

The system of peer-reviewed journals and conferences sponsored by major professional societies is the only proper forum for the presentation and debate of new ideas, in any field of science or mathematics.

It has been stunningly successful: errors have been uncovered, fraud has been rooted out and bogus scientific claims (such as the 1903 N-ray claim, the 1989 cold fusion claim, and the more recent assertion of an autism-vaccination link) have been debunked.

This all occurs with a level of reliability and at a speed that is hard to imagine in other human endeavours. Those who attempt to short-circuit this system are doing potentially irreparable harm to the integrity of the system.

They may enrich themselves or their friends, but they are doing grievous damage to society at large.


Credit for this article goes to Jonathan Borwein, University of Newcastle, and David H. Bailey, University of California, Davis.