A major study of recent international data on school mathematics performance casts doubt on some common assumptions about gender and math achievement, in particular the idea that girls and women have less mathematical ability because of biological differences.

“We tested some recently proposed hypotheses that try to explain a supposed gender gap in math performance and found the data did not support them,” says Janet Mertz, senior author of the study and a professor of oncology at the University of Wisconsin-Madison.

Instead, the Wisconsin researchers linked differences in math performance to social and cultural factors.

The new study, by Mertz and Jonathan Kane, a professor of mathematical and computer sciences at the University of Wisconsin-Whitewater, was published in December 2011 in Notices of the American Mathematical Society. The study looked at data from 86 countries, which the authors used to test the “greater male variability hypothesis” famously expounded in 2005 by Lawrence Summers, then president of Harvard, as the primary reason for the scarcity of outstanding women mathematicians.

That hypothesis holds that males diverge more from the mean at both ends of the spectrum and, hence, are more heavily represented among the highest performers. But, using the international data, the Wisconsin authors observed that greater male variation in math achievement is absent in some countries and, in some others, is mostly due to boys with low scores, indicating that it relates much more to culture than to biology.

The new study relied on data from the 2007 Trends in International Mathematics and Science Study and the 2009 Programme for International Student Assessment.

“People have looked at international data sets for many years,” Mertz says. “What has changed is that many more non-Western countries are now participating in these studies, enabling much better cross-cultural analysis.”

The Wisconsin study also debunked the idea proposed by Steven Levitt of “Freakonomics” fame that gender inequity does not hamper girls’ math performance in Muslim countries, where most students attend single-sex schools. Levitt claimed to have disproved a prior conclusion of others that gender inequity limits girls’ mathematics performance. He suggested, instead, that Muslim culture or single-sex classrooms benefit girls’ ability to learn mathematics.

By examining the data in detail, the Wisconsin authors noted other factors at work. “The girls living in some Middle Eastern countries, such as Bahrain and Oman, had, in fact, not scored very well, but their boys had scored even worse, a result found to be unrelated to either Muslim culture or schooling in single-gender classrooms,” says Kane.

He suggests that Bahraini boys may have low average math scores because some attend religious schools whose curricula include little mathematics. Also, some low-performing girls drop out of school, making the tested sample of eighth graders unrepresentative of the whole population.

“For these reasons, we believe it is much more reasonable to attribute differences in math performance primarily to country-specific social factors,” Kane says.

To measure the status of females relative to males within each country, the authors relied on a gender-gap index, which compares the genders in terms of income, education, health and political participation. Relating these indices to math scores, they concluded that math achievement at the low, average and high end for both boys and girls tends to be higher in countries where gender equity is better. In addition, in wealthier countries, women’s participation and salary in the paid labor force were the main factors linked to higher math scores for both genders.

“We found that boys — as well as girls — tend to do better in math when raised in countries where females have better equality, and that’s new and important,” says Kane. “It makes sense that when women are well-educated and earn a good income, the math scores of their children of both genders benefit.”

Mertz adds, “Many folks believe gender equity is a win-lose zero-sum game: If females are given more, males end up with less. Our results indicate that, at least for math achievement, gender equity is a win-win situation.”

U.S. students ranked only 31st on the 2009 Programme for International Student Assessment, below most Western and East Asian countries. One proposed solution, creating single-sex classrooms, is not supported by the data. Instead, Mertz and Kane recommend increasing the number of math-certified teachers in middle and high schools, decreasing the number of children living in poverty and ensuring gender equality.

“These changes would help give all children an optimal chance to succeed,” says Mertz. “This is not a matter of biology: None of our findings suggest that an innate biological difference between the sexes is the primary reason for a gender gap in math performance at any level. Rather, these major international studies strongly suggest that the math-gender gap, where it occurs, is due to sociocultural factors that differ among countries, and that these factors can be changed.”

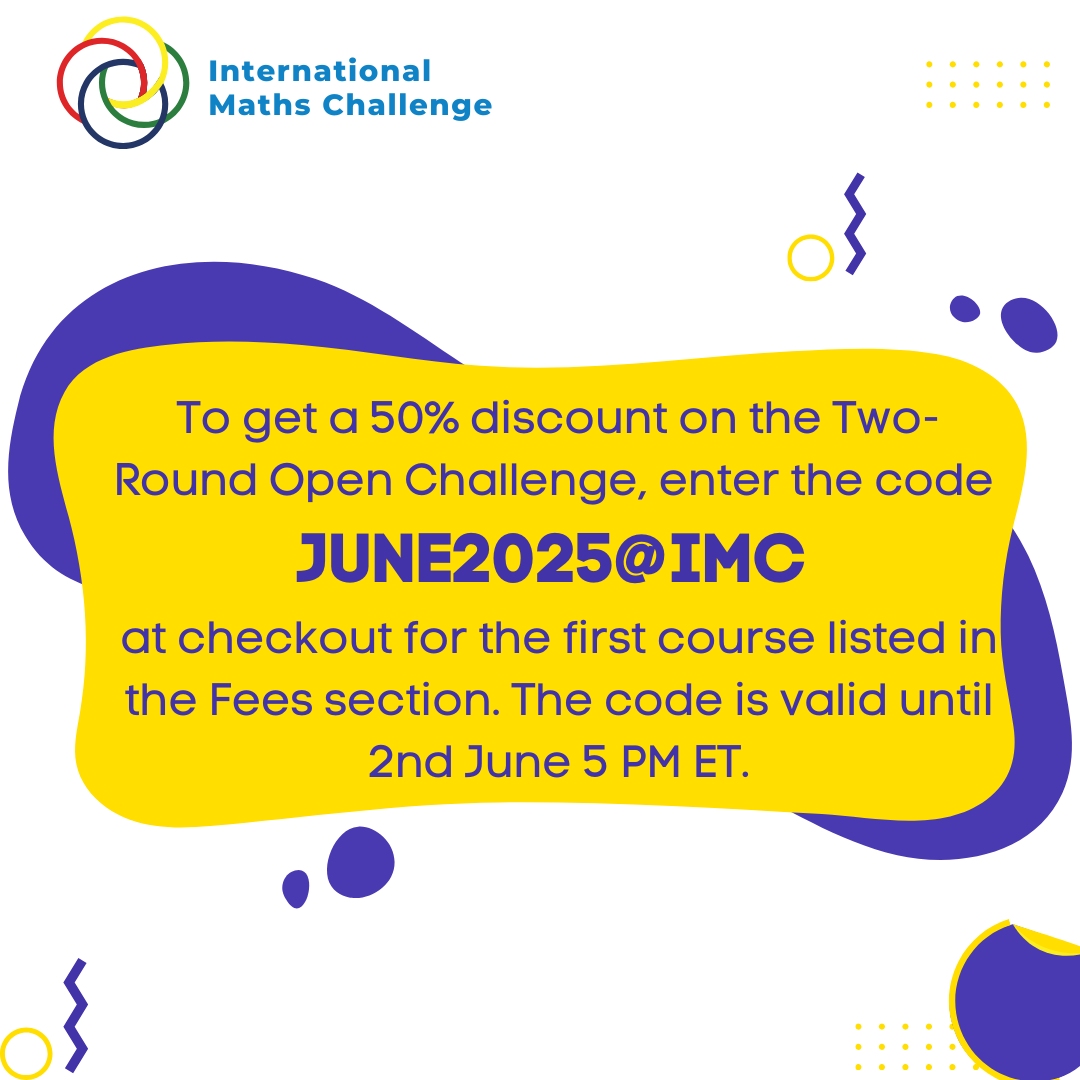


Credit for this article goes to the University of Wisconsin-Madison.