Credit: Unsplash/CC0 Public Domain

In just a few months, voters across America will head to the polls to decide who will be the next U.S. president. A new study draws on mathematics to break down how humans make decisions like this one.

The researchers, including Zachary Kilpatrick, an applied mathematician at CU Boulder, developed mathematical tools known as models to simulate how groups of people with various biases deliberate. They found that decision-makers with strong initial biases were typically the first to make a choice.

“If I want good quality feedback, maybe I should look to people who are a little bit more deliberate in their decision making,” said Kilpatrick, a co-author of the new study and associate professor in the Department of Applied Mathematics. “I know they’ve taken their due diligence in deciding.”

The researchers, led by Samantha Linn of the University of Utah, published their findings August 12 in the journal Physical Review E.

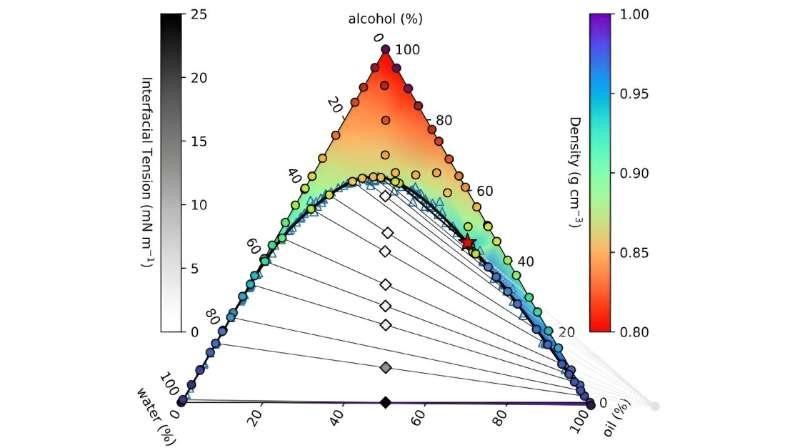

In the team’s models, mathematical decision-makers, or “agents,” gather information from the outside world until, ultimately, they make a choice between two options. That choice might be between pizza and Thai food for dinner, or between filling in the bubble for one candidate or the other.

The team discovered that when agents started off with a big bias (say, they really wanted pizza), they also made their decisions really quickly—even if those decisions turned out to run contrary to the available evidence (the Thai restaurant got much better reviews). Those with smaller biases, in contrast, often took so long to deliberate that their initial preconceptions were washed away entirely.

The results are perhaps not surprising, depending on your thoughts about human nature. But they can help to reveal the mathematics behind how the brain works when it needs to make a quick choice in the heat of the moment—and maybe even more complicated decisions like who to vote for.

“It’s like standing on a street corner and deciding in a split second whether you should cross,” he said. “Simulating decision making gets a little harder when it’s something like, ‘Which college should I go to?’”

Pouring water

To understand how the team’s mathematical agents work, it helps to picture buckets. Kilpatrick and his colleagues typically begin their decision-making experiments by feeding their agents information over time, a bit like pouring water into a mop pail. In some cases, that evidence favours one decision (getting pizza for dinner), and in others, the opposite choice (Thai food). When the buckets fill to the brim, they tip over, and the agent makes its decision.

In their experiment, the researchers added a twist to that setup: They filled some of their buckets partway before the simulations began. Those agents, like many humans, were biased.
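One standard way to write the bucket picture down, offered here as an illustrative assumption rather than the study's exact model, is a biased random walk. Let the water level $x$ start at an integer bias $b$ between two integer brims $-\theta$ and $+\theta$, and let each new piece of evidence raise the level by 1 with probability $p$ (favouring option "+") or lower it by 1 with probability $q = 1 - p$. The classic gambler's-ruin result then gives the probability that the agent tips toward "+":

$$
P_{+}(b) = \frac{1 - r^{\,b+\theta}}{1 - r^{\,2\theta}}, \qquad r = \frac{q}{p}, \quad p \neq \tfrac{1}{2}, \quad -\theta \le b \le \theta.
$$

For example, with $\theta = 10$ and $p = 0.6$ (so $r = 2/3$), an unbiased agent ($b = 0$) picks the option the evidence favours with probability $1/(1 + r^{10}) \approx 0.98$, while an agent that starts almost full on the opposite side ($b = -9$) does so with probability only $(1 - r)/(1 - r^{20}) \approx 0.33$.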

The team ran millions of simulations involving anywhere from 10 to thousands of agents. The researchers were also able to predict the behaviour of the most and least biased agents by hand, using pen, paper and some clever approximations.

A pattern began to emerge: The agents that started off with the biggest bias, or were mostly full of water to begin with, were the first to tip over, even when the preponderance of evidence suggested they should have chosen differently. Those agents who began with only small biases, in contrast, seemed to take time to weigh all of the available evidence and then make the best decision.
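The fast-versus-slow pattern in the paragraph above can be reproduced qualitatively in a few lines of Python. This is a minimal sketch, not the researchers' code, and it rests on several assumptions: each agent is a simple random walk (one unit of "water" per observation, +1 with probability `p_plus` for the option the evidence favours, otherwise -1), biases are nonzero integers drawn at random between the two brims, and the helper names `run_agent` and `simulate` and the default parameters are hypothetical. It compares the fastest and slowest 10% of deciders.

```python
import random
from statistics import mean

# Hypothetical evidence-accumulation ("bucket") model: an illustrative
# assumption, not the model or code used in the study.


def run_agent(start: int, threshold: int, p_plus: float,
              rng: random.Random) -> tuple[int, int]:
    """Add evidence one unit at a time until the bucket tips.

    The level starts at `start` (the agent's bias). Each observation adds +1
    with probability `p_plus` (evidence for option +1), otherwise -1.
    Returns (choice, number of observations taken).
    """
    x, steps = start, 0
    while abs(x) < threshold:
        x += 1 if rng.random() < p_plus else -1
        steps += 1
    return (1 if x > 0 else -1), steps


def simulate(n_agents: int = 10_000, threshold: int = 10,
             p_plus: float = 0.6, seed: int = 1) -> dict[str, float]:
    """Give each agent a random nonzero bias, then compare the fastest
    and slowest 10% of deciders."""
    rng = random.Random(seed)
    biases = [b for b in range(-threshold + 1, threshold) if b != 0]
    results = []
    for _ in range(n_agents):
        bias = rng.choice(biases)
        choice, steps = run_agent(bias, threshold, p_plus, rng)
        results.append((steps, bias, choice))
    results.sort(key=lambda r: r[0])  # fastest deciders first
    k = n_agents // 10
    fast, slow = results[:k], results[-k:]

    def agrees_with_bias(group):
        # Fraction whose final choice has the same sign as their starting bias.
        return mean(1.0 if (b > 0) == (c > 0) else 0.0 for _, b, c in group)

    def correct(group):
        # Option +1 is the one the evidence favours (p_plus > 0.5).
        return mean(1.0 if c == 1 else 0.0 for _, _, c in group)

    return {
        "fast_agree_with_bias": agrees_with_bias(fast),
        "slow_agree_with_bias": agrees_with_bias(slow),
        "fast_correct": correct(fast),
        "slow_correct": correct(slow),
    }


if __name__ == "__main__":
    for name, value in simulate().items():
        print(f"{name}: {value:.2f}")
```

Under these assumptions the fastest tenth should almost always follow its starting bias and be right only about as often as its bias happened to point the right way, while the slowest tenth should mostly follow the evidence. The exact numbers depend on the seed, `threshold` and `p_plus`.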

“The slowest agent to make a decision tended to make decisions in a way very similar to a completely unbiased agent,” Kilpatrick said. “They pretty much behaved as if they started from scratch.”

Neighbourhood choices

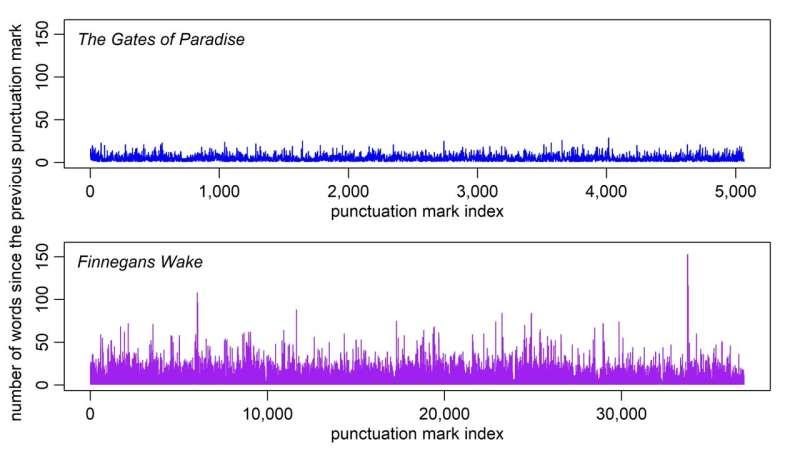

He noted that the study had some limitations. In the team’s experiments, for example, none of the agents knew what the others were doing. Kilpatrick compared it to neighbours staying inside their homes during an election year, not talking about their choices or putting up yard signs. In reality, humans often change their decisions based on the actions of their friends and neighbours.

Kilpatrick hopes to run a similar set of experiments in which the agents can influence each other’s behaviours.

“You might speculate that if you had a large group coupled together, the first agent to make a decision could kick off a cascade of potentially wrong decisions,” he said.

Still, political pollsters may want to take note of the team’s results.

“The study could also be applied to group decision making in human organizations where there’s democratic voting, or even when people give their input in surveys,” Kilpatrick said. “You might want to look at folks carefully if they give fast responses.”


Article credit: Daniel Strain, University of Colorado at Boulder